It happened again. I got caught in the black hole of web-surfing and the downward trundling of “oh, what’s that? That sounds interesting,” which led to a 4-hour adventure into interesting concepts and underlying psychological phenomena. Enjoy!

Let’s start with a thought-provoking idea.

Have you ever considered how expectations or targets might influence our motives, decision-making, and/or actions?

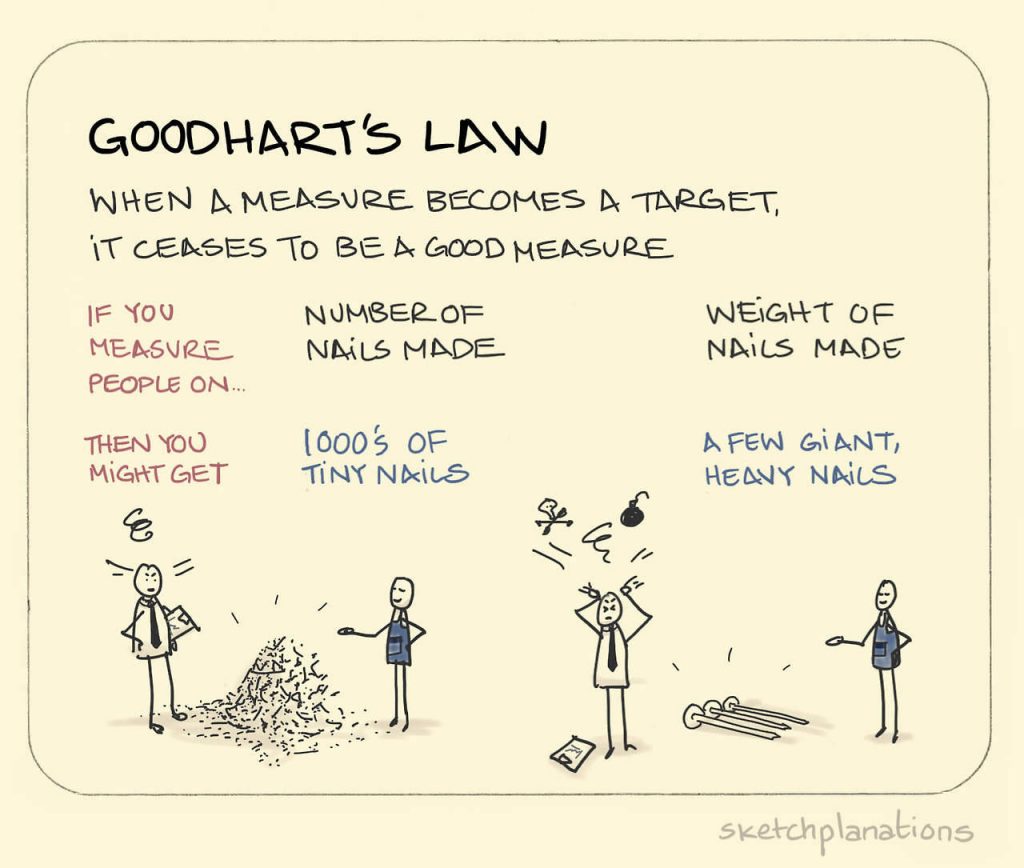

Well, Goodhart’s Law speaks to exactly that: “When a measure becomes a target, it ceases to be a good measure.” It’s an interesting idea that proposes a bit of a paradox. If the test itself becomes a training tool, are you simply training to the test, and does the test still work? It’s important, at least initially, to put this into context so we can better comprehend it.

Let’s discuss this in terms of physical therapy and rehabilitation, or return-to-play readiness. At this point, it is well agreed upon that functional testing is a necessary part of an injured athlete’s profile. Functional testing helps identify underlying and residual deficits, while also compiling data that can be cross-referenced against normative data for the athlete’s age, sex, or sport.

Let’s look at hop testing as an example. While hop testing is utilized as a functional test to identify impairments of strength, control, and movement strategy, it is also typically used as an intervention during the rehabilitation process. This is a bit of a dilemma. Hop testing, from a quantitative standpoint, compares the distance hopped on the injured limb to the distance hopped on the non-injured limb. While some clinicians will also evaluate quality of movement, the reported data is based on the distance achieved relative to the other limb. Thus the athlete, who has been trained towards the test and typically knows what it is designed to measure, also knows the criteria by which they are being judged, which potentially biases their performance or their strategy for completing the test. A beautiful example of Goodhart’s Law in full effect.

If the athlete knows the rules, then the test will not work. All the athlete must do is jump a shorter distance on the non-injured limb (a distance achievable by their injured limb) and practice landing with good control, and POOF, they achieve “Limb Symmetry and Good Quality Scores.” Does that really measure what we want? I would assume not!
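To put rough numbers on that, here is a small sketch of the limb symmetry arithmetic (injured-limb distance divided by non-injured-limb distance, times 100). The hop distances are made up for illustration, and the 90% pass mark is an assumed cutoff, not a figure from any specific protocol.

```python
def limb_symmetry_index(involved_cm: float, uninvolved_cm: float) -> float:
    """Return the injured-limb hop distance as a percentage of the non-injured limb."""
    if uninvolved_cm <= 0:
        raise ValueError("uninvolved_cm must be positive")
    return 100.0 * involved_cm / uninvolved_cm


# Hypothetical distances in centimetres, invented for this example.
INVOLVED_CM = 120.0               # best effort on the injured limb
UNINVOLVED_TRUE_CM = 160.0        # genuine best effort on the non-injured limb
UNINVOLVED_HELD_BACK_CM = 125.0   # deliberately shortened hop on the non-injured limb

PASS_THRESHOLD = 90.0  # assumed pass mark, in percent

honest_lsi = limb_symmetry_index(INVOLVED_CM, UNINVOLVED_TRUE_CM)
gamed_lsi = limb_symmetry_index(INVOLVED_CM, UNINVOLVED_HELD_BACK_CM)

print(f"honest: {honest_lsi:.1f}%  passes: {honest_lsi >= PASS_THRESHOLD}")
print(f"gamed:  {gamed_lsi:.1f}%  passes: {gamed_lsi >= PASS_THRESHOLD}")
```

The injured limb hops the same 120 cm in both cases. The only thing that changed is the denominator, and the score moved from a fail (75%) to a pass (96%).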

This is an unintended consequence of a well-intentioned process.

Further down the inter-web exploration rabbit hole, the Cobra Effect enters the chat.

The Cobra Effect “occurs when an attempted solution to a problem makes the problem worse, as a type of unintended consequence.” The concept comes from an anecdote about a cobra problem in Delhi, India. Shortened for the sake of time: the British, who were ruling India at the time, set up a bounty for cobras in an attempt to reduce the cobra population plaguing the city. This worked very well at first, as the population went down, but then some individuals saw an opportunity and began “farming cobras” in an attempt to make money. The government caught on to this black market and shut down the bounty system, which in turn prompted these “farmers” to release all of their cobras back into the wild, since they were no longer worth anything. Thus, the story ends with a higher population of cobras in the wild than before the bounty policy was introduced.

Now, back to our testing example. What if the athlete, being trained towards the test and knowing the criteria by which they will be graded, changes how they perform on the test in order to pass it?

…Whew. It’s like Inception, but without Leo and Ellen.

This is a real problem, and not just in lower extremity testing for post-operative return to sport readiness. It can underpin our decision-making and undermine our good intentions. So how do we make amends for this?

Meet “Dunning-Kruger.”

The Dunning-Kruger Effect, which is one of my absolute favorite principles, is a very eloquent representation of the relationship between experience and perceived intelligence regarding a topic area or subject matter. It is “a cognitive bias in which people mistakenly assess their cognitive ability as greater than it is.”

How does this mesh with our topic? Well, if a person is doing all of the things listed above, training an individual with the test and giving them an answer key, but doing so under the pretense of preparation and readiness without knowing better, can they really be to blame? If the person is unaware or lacks complete understanding, then it cannot be put down to bad intention, right? And now we’re right back to where we started.

I wanted to share this as something to chew on a bit, and I am really curious to hear your thoughts. No, I am not discrediting hop testing, hopping progressions, or hop training by any means! I use them and I believe they help in a variety of areas, but maybe we can think of different ways to improve function and performance while also preserving the quality of the assessment?

My opinion is that we need more constrained testing, which removes the ability to cheat or circumvent the criteria of the test. If there is only one way to complete the test, and that test measures what we want, then train away! But hop testing allows for multiple ways to complete it, so we are not quite sure what we are testing. Teaching to a test simply makes you better at completing that task. It’s a problem-and-solution mentality.

I hope this inspires a bit of reflection and critical thinking, and maybe next time you will consider these concepts when “testing or teaching” your clients!

Thanks for reading.